Measuring what Matters: Construct Validity in Large Language Model Benchmarks

Andrew M Bean, Ryan Othniel Kearns, Angelika Romanou, Franziska Sofia Hafner, Harry Mayne, Jan Batzner, Negar Foroutan, Chris Schmitz, Karolina Korgul, Hunar Batra, Oishi Deb, Emma Beharry, Cornelius Emde, Thomas Foster, Anna Gausen, María Grandury, Simeng Han, Valentin Hofmann, Lujain Ibrahim, Hazel Kim, Hannah Rose Kirk, Fangru Lin, Gabrielle Kaili-May Liu, Lennart Luettgau, Jabez Magomere, Jonathan Rystrøm, Anna Sotnikova, Yushi Yang, Yilun Zhao, Adel Bibi, Antoine Bosselut, Ronald Clark, Arman Cohan, Jakob Foerster, Yarin Gal, Scott A Hale, Inioluwa Deborah Raji, Christopher Summerfield, Philip HS Torr, Cozmin Ududec, Luc Rocher, Adam Mahdi

November 2025

Abstract

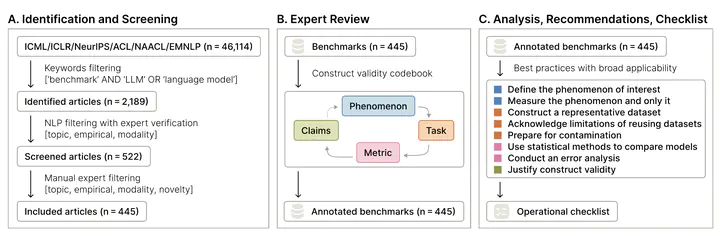

Evaluating large language models (LLMs) is crucial both for assessing their capabilities and for identifying safety or robustness issues before deployment. Reliably measuring abstract and complex phenomena such as ‘safety’ and ‘robustness’ requires strong construct validity, that is, measures that capture what matters to the phenomenon. With a team of 29 expert reviewers, we conduct a systematic review of 445 LLM benchmarks from leading conferences in natural language processing and machine learning. Across the reviewed articles, we find patterns in the measured phenomena, tasks, and scoring metrics that undermine the validity of the resulting claims. To address these shortcomings, we provide eight key recommendations and detailed, actionable guidance for researchers and practitioners developing LLM benchmarks.

Publication

Neural Information Processing Systems
